OpenVLA

$ 0

Open-source 7B-parameter vision-language-action (VLA) model trained on the Open X-Embodiment dataset; a widely adopted open baseline for robot manipulation


Brain Score

3

Specifications and details:

| Nationality | US |
|---|---|
| Website | https://openvla.github.io |
| Model type | Foundation Model |
| Manufacturer | Stanford / UC Berkeley |
| Release date | 2024 |

Description

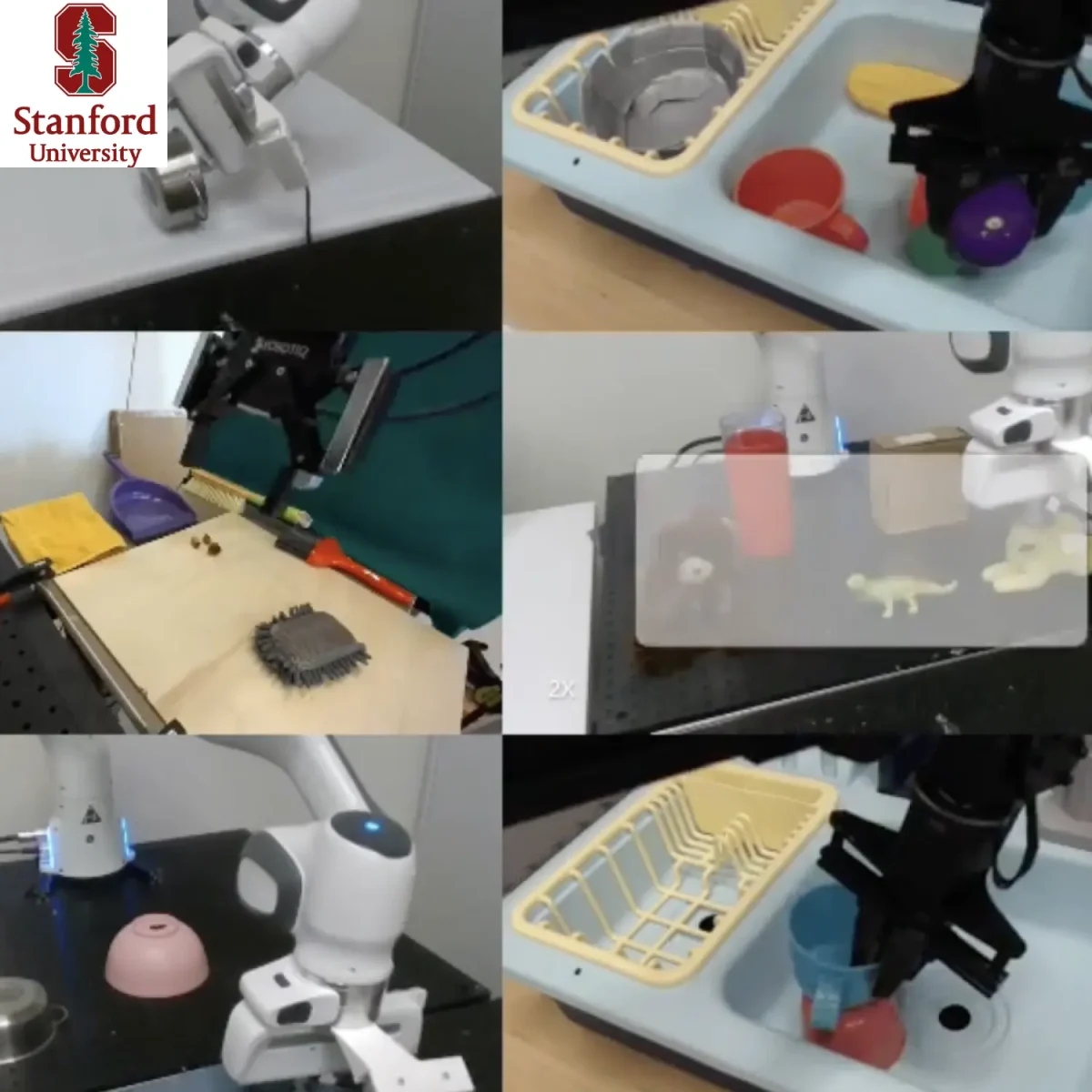

OpenVLA is a practical, accessible foundation model that links vision, language, and action in a single system. Instead of relying on rigid task pipelines, it interprets visual input together with a language instruction and translates them directly into robot actions. This lets robots adapt to different environments and situations. Its open-source design also makes it widely usable: developers can build on, test, and refine its capabilities without heavy restrictions.

In addition, OpenVLA benefits from large-scale training across diverse robots and settings, so learned behaviors transfer between environments with minimal adjustment. This flexibility lets robots handle both simple and complex tasks with consistent performance. Because it serves as a baseline model, researchers use it to benchmark and improve new approaches. As a result, it continues to drive progress toward general-purpose robotic intelligence.
