Humanoid Training Data Survey

The Data Gold Rush: Unlocking the Next Era of Humanoid Intelligence

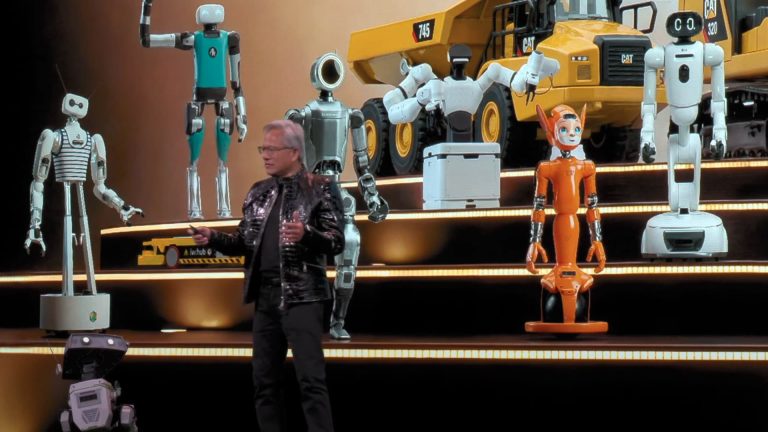

The global humanoid robotics industry has reached a pivotal juncture. As development shifts toward AI-first systems, the availability of high-quality humanoid training data has become the primary bottleneck to commercial viability. While the past decade focused on mechanical stability and actuator performance, 2026 is defined by a hardware plateau — intelligence and adaptability now matter more than raw mechanics.


The Intelligence Transition

Modern humanoids are increasingly trained through demonstrations and large-scale foundation models rather than rigid, hand-coded scripts. However, a significant data gap remains. Compared to the trillions of tokens used to train large language models, real-world humanoid training data is still scarce, fragmented, and difficult to standardize. This shortage is one of the key constraints preventing general-purpose autonomy from scaling beyond controlled environments.

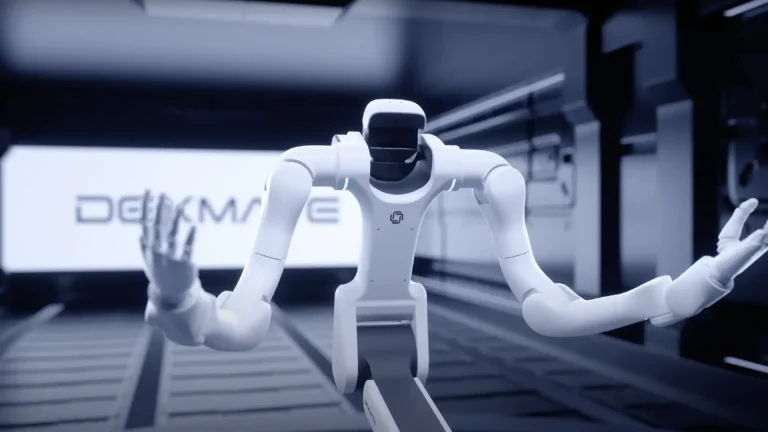

The challenge is most visible in the “sim-to-real” gap. Humanoids are highly sensitive to physical variables like friction and timing; errors that are negligible in simulation can lead to total instability in the real world. Consequently, the industry is racing to acquire high-fidelity data that grounds AI models in physical reality.

Mapping Market Requirements

Beyond vision, the industry is increasingly focused on the “manipulation bottleneck”. Solving complex tasks requires tactile and force-torque data to give robots a sense of touch.

To help standardize these requirements, the Humanoid Guide is running a survey to map the industry's specific data needs. It gathers input on required video resolutions (from 480p to 4K), sensory modalities (for example, tactile data captured with touch-sensitive gloves), and the pricing models that will support the next generation of datasets.
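
For readers who think in schemas, the kinds of input described above could be captured in a small record like the one sketched below. This is only an illustration, not the survey's actual format: the field names, the intermediate 720p and 1080p tiers, the modality labels, and the `per_hour` pricing value are all assumptions made for the example.

```python
from dataclasses import dataclass, field

# Assumed resolution tiers; only the 480p and 4K endpoints come from the article.
RESOLUTION_HEIGHTS = {"480p": 480, "720p": 720, "1080p": 1080, "4K": 2160}

# Assumed labels for touch-related modalities (tactile and force-torque data).
TOUCH_MODALITIES = {"tactile", "force-torque"}


@dataclass
class DataRequirement:
    """One respondent's stated data needs (hypothetical fields)."""
    min_resolution: str
    modalities: list[str] = field(default_factory=list)
    pricing_model: str = "unspecified"

    def accepts(self, resolution: str) -> bool:
        """True if a dataset's video resolution meets the stated minimum."""
        return RESOLUTION_HEIGHTS[resolution] >= RESOLUTION_HEIGHTS[self.min_resolution]

    def needs_touch(self) -> bool:
        """True if the respondent asks for any touch-related modality."""
        return any(m in TOUCH_MODALITIES for m in self.modalities)


# Example response: wants at least 1080p video plus tactile data, priced per hour.
req = DataRequirement("1080p", ["video", "tactile"], "per_hour")
print(req.accepts("4K"), req.accepts("480p"), req.needs_touch())
```

Recording responses in a consistent shape like this would make it straightforward to compare requirements across respondents, which is the kind of standardization the survey is aiming for.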

Industry stakeholders are invited to contribute to this research and help define the data standards of tomorrow.