OpenClaw Connects AI Agents to Unitree G1 Humanoid Robot

An open-source AI agent framework originally built for desktop automation is now being applied to humanoid robotics. OpenClaw, an agentic interface that allows large language models to execute multi-step tasks, has been integrated with the Unitree G1 humanoid robot, enabling command and feedback loops through common messaging platforms.

From chat interface to physical control

OpenClaw acts as a gateway between user messages and executable tools. It supports large language models including Claude, DeepSeek, and OpenAI GPT variants, and exposes toolchains that allow these models to trigger real-world actions. Through a ROS2 bridge known as RosClaw, OpenClaw can interface with robotic software stacks using rosbridge_server and DDS communication.
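
To make the rosbridge_server link concrete, here is a minimal sketch of the JSON messages a client sends under the rosbridge protocol to advertise and publish on a topic. It is not taken from RosClaw itself: the helper names, the `/cmd_vel` topic, and the use of a `geometry_msgs/msg/Twist` velocity message are assumptions chosen for illustration, and the topics a Unitree G1 actually exposes may differ.

```python
import json


def advertise_message(topic: str = "/cmd_vel",
                      msg_type: str = "geometry_msgs/msg/Twist") -> str:
    """Build a rosbridge 'advertise' operation (topic and type are assumed)."""
    return json.dumps({"op": "advertise", "topic": topic, "type": msg_type})


def twist_publish_message(linear_x: float, angular_z: float,
                          topic: str = "/cmd_vel") -> str:
    """Build a rosbridge 'publish' operation carrying a Twist-shaped payload.

    linear_x is forward speed in m/s, angular_z is yaw rate in rad/s.
    """
    payload = {
        "op": "publish",
        "topic": topic,
        "msg": {
            "linear": {"x": linear_x, "y": 0.0, "z": 0.0},
            "angular": {"x": 0.0, "y": 0.0, "z": angular_z},
        },
    }
    return json.dumps(payload)


if __name__ == "__main__":
    # A client would send these strings as text frames over the WebSocket
    # that rosbridge_server exposes.
    print(advertise_message())
    print(twist_publish_message(0.2, 0.0))
```

Because the protocol is plain JSON over a WebSocket, a gateway process does not need a ROS2 installation of its own, which is probably part of the appeal of this kind of bridge.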

The resulting architecture links messaging clients such as Telegram, Discord, Signal, or WhatsApp to ROS2-based robots. Commands sent as plain text are interpreted by the agent, translated into robot-specific instructions, and executed through navigation and motion planning frameworks such as Nav2 and MoveIt2.

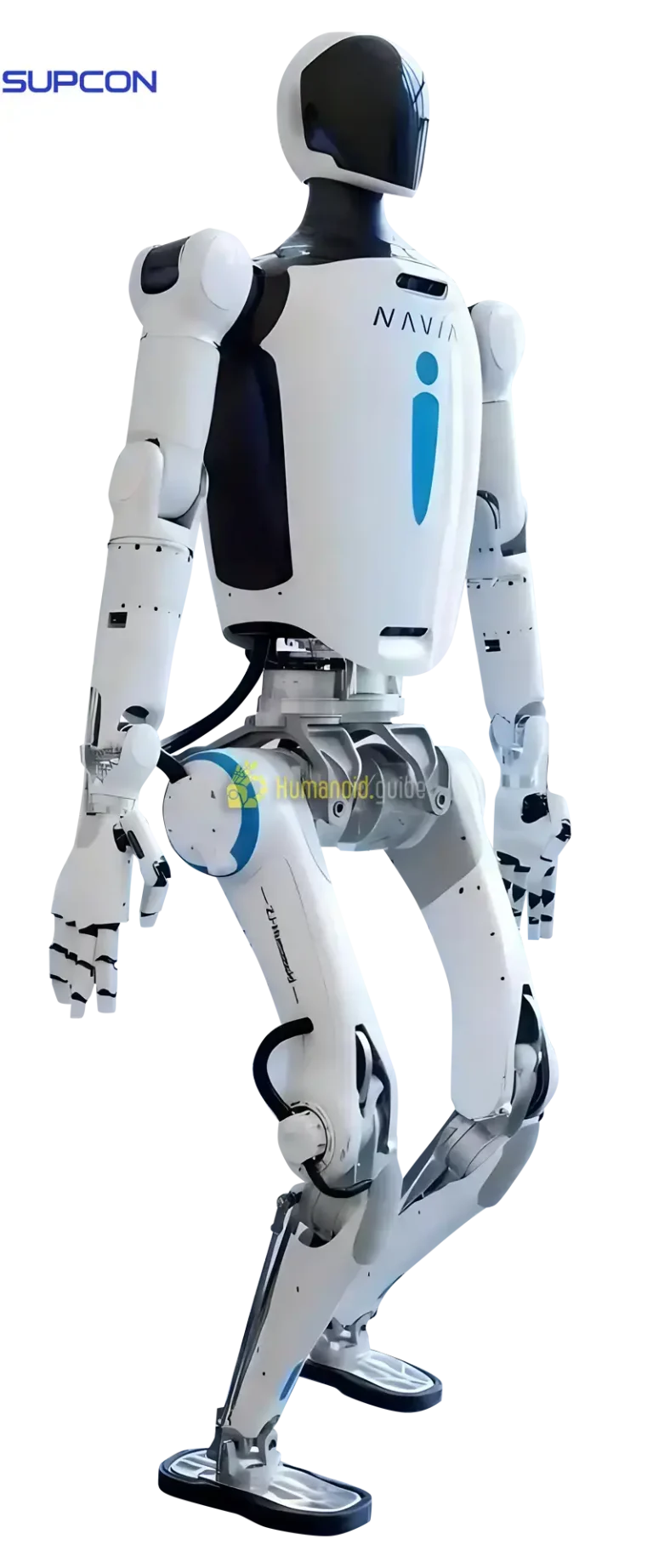

Text-based control of the Unitree G1

The Unitree G1 is a bipedal humanoid robot priced at $16,000 and equipped with 3D LiDAR, depth cameras, and articulated joints designed for dynamic mobility. A newly released unitree robot skill for OpenClaw enables developers to control the G1 through instant messaging rather than through a traditional graphical interface or vendor-specific SDK.

Users can issue simple motion commands such as "forward 1m" or "turn left 45". These are parsed by the agent and transmitted to the robot for execution. The integration is bidirectional: RGB-D frames from the robot's Insight9 stereo camera can be retrieved and sent back to the chat interface for remote inspection and situational awareness.
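
As an illustration of what parsing such commands involves, the sketch below turns the two example phrases into structured motion commands. In OpenClaw the interpretation is done by the language model agent, so this deterministic regex parser is only a stand-in. The `MotionCommand` type, the `parse_command` function, the extra "backward" and "right" keywords, and the sign conventions are all hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MotionCommand:
    action: str   # "move" or "turn"
    value: float  # metres for "move", degrees for "turn"


# Assumed grammar: "<forward|backward> <number>m" and "turn <left|right> <number>".
_MOVE = re.compile(r"^(forward|backward)\s+(\d+(?:\.\d+)?)\s*m$")
_TURN = re.compile(r"^turn\s+(left|right)\s+(\d+(?:\.\d+)?)$")


def parse_command(text: str) -> Optional[MotionCommand]:
    """Return a MotionCommand, or None if the text is not recognised."""
    normalized = text.strip().lower()

    match = _MOVE.match(normalized)
    if match:
        # Forward is positive distance, backward is negative.
        sign = 1.0 if match.group(1) == "forward" else -1.0
        return MotionCommand("move", sign * float(match.group(2)))

    match = _TURN.match(normalized)
    if match:
        # Left is treated as positive (counter-clockwise) rotation.
        sign = 1.0 if match.group(1) == "left" else -1.0
        return MotionCommand("turn", sign * float(match.group(2)))

    return None


if __name__ == "__main__":
    for phrase in ("forward 1m", "turn left 45", "do a backflip"):
        print(phrase, "->", parse_command(phrase))
```

Returning `None` for anything unrecognised keeps the failure mode explicit: a message that cannot be mapped to a known motion is rejected rather than guessed at.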

With optional TinyNav integration, OpenClaw can also handle autonomous path planning and obstacle avoidance. This shifts the interaction model from low-level teleoperation to higher-level task delegation, where the operator specifies intent and the software stack manages execution details.

Beyond humanoids: hands and arms

Although the Unitree G1 integration is the most visible humanoid example, OpenClaw is also being connected to modular and industrial hardware. One project linked an OpenClaw agent to a 3D-printed, 16-joint Aero Hand Open. Using a USB camera, the system verified its own gestures, calibrated firmware, and executed simple hand poses while narrating actions through a chat interface.

Another integration connects OpenClaw to a NERO 7-axis robotic arm via the pyAgxArm SDK. Instead of manually coding kinematics routines, users describe desired movements in natural language. The agent generates and runs the corresponding Python scripts to drive the arm.

While these systems are not full-body humanoids, they demonstrate the same pattern that is now being applied to bipedal platforms: language-driven intent mapped to ROS2-compatible control stacks.

Enterprise workflows and fleet orchestration

OpenClaw is also being positioned within larger automation frameworks. The Peaq Robotics SDK provides workflows that make robots OpenClaw-ready, allowing shared agents to distribute skills and orchestrate multi-machine processes. The concept includes secure connectivity, reusable behaviors, and coordination across fleets.

Healthcare logistics has been identified as a potential application area, where automated delivery robots in hospitals could be managed through an agent that integrates compliance constraints and workflow logic. In this framing, the agent functions as a coordination layer above the physical platforms.

Implications for humanoid robotics

For humanoid developers and operators, the integration illustrates a broader shift in interface design. Instead of building bespoke dashboards or tightly coupled control software, teams can leverage general-purpose language models as supervisory layers. Messaging-based control lowers the barrier to experimentation and remote operation, particularly in early-stage deployments.

However, delegating hardware control to autonomous agents raises governance and cybersecurity considerations. Granting an AI system authority over physical actuators and identity-linked credentials introduces risks that must be mitigated through access control, safety constraints, and auditability within the ROS2 stack.
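
The three mitigations named here can be sketched as a single gate that every command passes through before it reaches the robot. This is a generic illustration, not part of OpenClaw or RosClaw: the `CommandGate` class, its default limits, and the log format are hypothetical, and a real deployment would presumably also enforce limits on the robot side rather than trusting the agent alone.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass
class CommandGate:
    """Checks who is asking, what they ask for, and records the decision."""

    allowed_users: Set[str]       # access control: chat identities permitted
    max_distance_m: float = 2.0   # safety constraint (assumed value)
    max_turn_deg: float = 90.0    # safety constraint (assumed value)
    audit_log: List[Tuple[str, str, float, str]] = field(default_factory=list)

    def check(self, user: str, action: str, value: float) -> bool:
        """Return True only if the user is allowed and the value is in limits."""
        if user not in self.allowed_users:
            verdict = "denied: unknown user"
        elif action == "move" and abs(value) <= self.max_distance_m:
            verdict = "allowed"
        elif action == "turn" and abs(value) <= self.max_turn_deg:
            verdict = "allowed"
        else:
            # Covers both out-of-range values and unrecognised actions.
            verdict = "denied: outside limits"

        # Auditability: every request is recorded, including refusals.
        self.audit_log.append((user, action, value, verdict))
        return verdict == "allowed"


if __name__ == "__main__":
    gate = CommandGate(allowed_users={"operator_1"})
    print(gate.check("operator_1", "move", 1.0))
    print(gate.check("stranger", "move", 1.0))
    print(gate.check("operator_1", "move", 10.0))
    for entry in gate.audit_log:
        print(entry)
```

The gate denies by default: an unknown action falls through to the refusal branch, so adding a new capability requires an explicit rule rather than an omission.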

The Unitree G1 example shows how quickly humanoid platforms can become endpoints for general-purpose AI agents. As agent frameworks mature, the distinction between chat-based reasoning and embodied execution continues to narrow, with humanoid robots emerging as one of the most visible testbeds for this convergence.

Source: evoailabs.medium.com